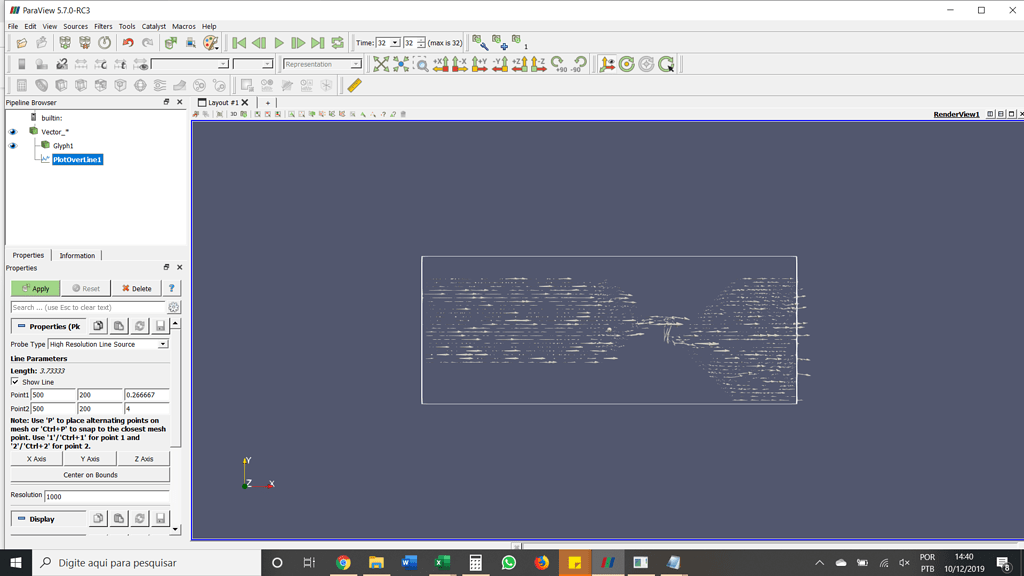

If the progress bar is moving slowly at some point, the name on it can be a good indication of what is taking some time. When executing the pipeline, ParaView’s progress bar will move multiple times from left to right, each time associated with a specific name. If a user’s evaluation of the ParaView’s slowness is already a good piece of information, paraview also provides tools to refine this evaluation in order to make better decisions afterwards. A slowness here is probably related to data rendering. When a user stops interacting with the view, a single still render will be performed (by default). A slowness here is probably related to data rendering or network connection. When a user interacts with the view, interactive renders will be performed (by default). A slow response in this case could be induced by many factors and a refinement of the evaluation will be needed. When a user steps in the animation, all filters and readers dealing with temporal data will be executed and a rendering will be performed. When a user clicks Apply in ParaView, all non up-to-date filters and readers will be executed and a rendering will be performed. Identifying these moments can be very helpful. In order to be able to answer this question, each user will need to evaluate their workflow and understand what is limiting the workflow and see how to improve it.įirst, while evaluating of existing workflows, a user can see slowdowns of ParaView at different moments. Rendering: Transforming a 3D scene into a 2D image, usually very efficiently performed by the GPU, but not necessarily.pvserver: ParaView server executable, which is usually run with MPI in a HPC environment.

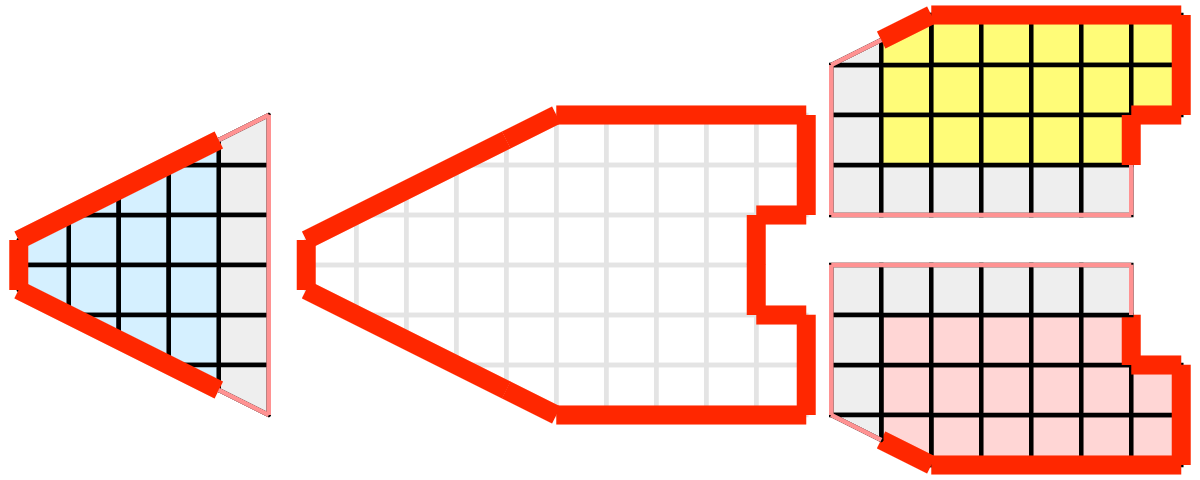

Pipeline: Series of ParaView filters organised in a direct acyclic graph and executed by ParaView.MultiThread implementations are typically TBB or OpenMP. MultiThread: Parallel execution of a code (on CPU) within a single node, using potentially all the node’s core.MPI: Message Passing Interface, a node-to-node communication standard used in all HPC, multiple implementations exists, OpenMPI, MPICH and many others.Headless: Rendering without a graphical environment (like Xorg server), which can use GPU or CPU.Distributed Data: When working with a distributed server, ParaView will usually expect the data to be spatially distributed on each server so that each server processes and renders only part of the data.Distributed: Distributed execution of a code is when a code is run on multiple nodes or multiple cores, typically using a MPI implementation.First, some vocabulary will be necessary. In order to figure out how many nodes and cores per node one should use when using ParaView in a distributed environment, many notions will need to be understood and the whole user workflow will need to be evaluated. This blog post will try to answer this question in order for HPC administrators and users to be able to make these decisions and use ParaView to its fullest potential. This very simple question has a very complex answer, which depends on many parameters from the hardware being used, to the type of datasets being processed, or the post processing filters in use. When deploying ParaView in an HPC environment, once all the technical requirements are met, everything is working perfectly and users start to try and use ParaView for actual data post-processing, one of the first questions that may come to mind is how many cores and nodes should be used by ParaView servers ?
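The moments listed above split a slow interaction into two parts. One is a pipeline execution phase, where readers and filters run. The other is a rendering phase. A rough way to tell these apart is to time each phase on its own and see which one dominates.

The Python sketch below does this and does not depend on ParaView. It makes a few assumptions. The functions `execute_pipeline` and `render` are hypothetical stand-ins that simply sleep, and they are not ParaView API. The `LIKELY_CAUSE` table is also an illustrative assumption, loosely based on the list above. In a real session, the stand-ins would be replaced by the actual work being measured.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator

# Hypothetical stand-ins (NOT ParaView API): they only sleep, to mimic
# a pipeline update followed by a still render.
def execute_pipeline() -> None:
    time.sleep(0.05)  # pretend readers/filters take ~50 ms

def render() -> None:
    time.sleep(0.01)  # pretend a still render takes ~10 ms

@contextmanager
def timed(phase: str, timings: Dict[str, float]) -> Iterator[None]:
    """Add the wall-clock duration of the enclosed block to timings[phase]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[phase] = timings.get(phase, 0.0) + time.perf_counter() - start

# Illustrative assumption: the likely bottleneck behind each slow phase.
LIKELY_CAUSE = {
    "pipeline": "readers/filters (data processing)",
    "render": "data rendering (or network, for interactive renders)",
}

def apply_and_render() -> Dict[str, float]:
    """Mimic clicking Apply: execute the pipeline, then render once."""
    timings: Dict[str, float] = {}
    with timed("pipeline", timings):
        execute_pipeline()
    with timed("render", timings):
        render()
    return timings

def slowest_phase(timings: Dict[str, float]) -> str:
    """Return the name of the phase with the largest recorded time."""
    return max(timings, key=timings.get)

if __name__ == "__main__":
    t = apply_and_render()
    for name, seconds in t.items():
        print(f"{name:>8}: {seconds * 1000:.1f} ms")
    phase = slowest_phase(t)
    print(f"slowest phase: {phase} -> likely {LIKELY_CAUSE[phase]}")
```

The key idea is to time the phases separately rather than the whole interaction. A single overall timing cannot distinguish a data-processing bottleneck from a rendering one. Those two bottlenecks usually call for different changes in the number of nodes and cores.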
